It's a layered problem

Building an AI product with strong adoption and retention requires work on the wrapper, i.e. the app layer.

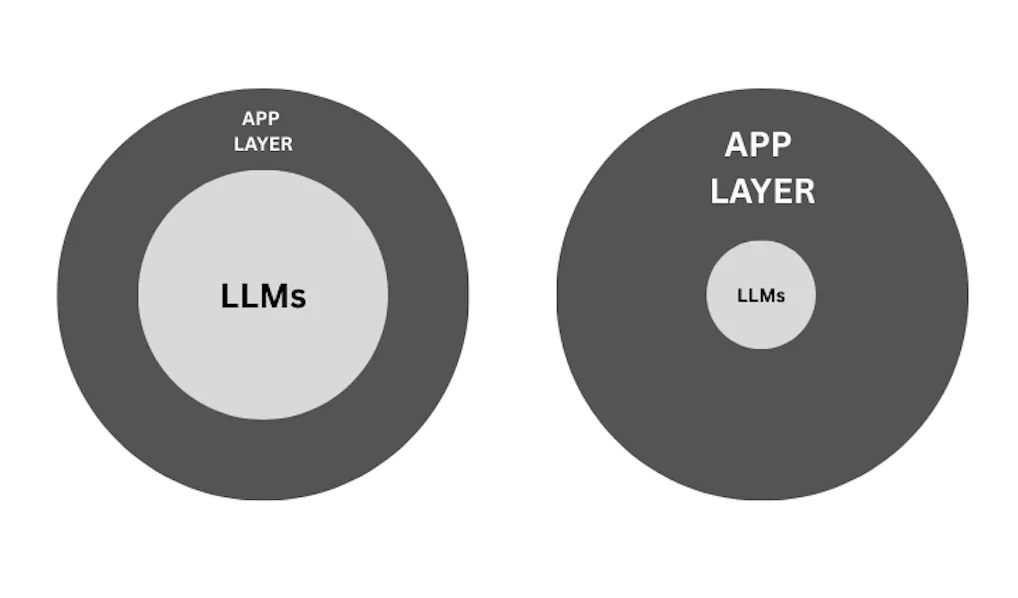

The image on the left represents an AI product with a simple interface that interacts with industry-grade LLMs via straightforward API calls.

The image on the right represents an AI product that reduces its dependence on raw LLM API calls, i.e. a product built by investing time and money in the app layer. The app layer consists of evals, fallbacks, dynamic UX, context retrieval, personalization, etc. Here are some questions to think about while expanding your app layer.

- How can I gather feedback on LLM outputs from my users in a non-invasive manner?

- How can I embed data in my context layer to improve precision and recall?

- How can I evaluate LLM responses to reduce hallucination rates?

- How can I gather maximum context from my user with minimal effort?

- How can I optimize my prompt so that it accurately fulfills the user's intended goal?

- How can I design fallback UX given that LLMs are non-deterministic?

- How can I personalize responses for each user?
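
To make the fallback question concrete, here is a minimal sketch of one possible approach: validate each model reply, retry a few times, and return a deterministic fallback if nothing usable comes back. Everything named here is an assumption for illustration, not a prescribed design: `call_model` stands in for whatever LLM client you use, and the expected reply shape (a JSON object with an `answer` string) and `FALLBACK_MESSAGE` are hypothetical.

```python
import json
from typing import Callable, Optional

# Hypothetical fallback copy; a real product would tailor this to its UX.
FALLBACK_MESSAGE = "Sorry, I couldn't generate a reliable answer. Please try rephrasing."


def parse_answer(raw: str) -> Optional[dict]:
    """Return the parsed reply if it is a JSON object with a non-empty
    string under 'answer'; otherwise return None."""
    try:
        data = json.loads(raw)
    except (json.JSONDecodeError, TypeError):
        return None
    if isinstance(data, dict):
        answer = data.get("answer")
        if isinstance(answer, str) and answer.strip():
            return data
    return None


def answer_with_fallback(
    prompt: str,
    call_model: Callable[[str], str],  # assumed wrapper around your LLM API
    max_attempts: int = 3,
) -> dict:
    """Call the model up to max_attempts times and return the first valid
    reply; if every attempt fails, return a deterministic fallback."""
    for attempt in range(1, max_attempts + 1):
        try:
            raw = call_model(prompt)
        except Exception:
            # Treat transport or provider errors like an invalid reply.
            continue
        parsed = parse_answer(raw)
        if parsed is not None:
            return {"answer": parsed["answer"], "source": "model", "attempts": attempt}
    return {"answer": FALLBACK_MESSAGE, "source": "fallback", "attempts": max_attempts}
```

The `source` field lets the UI render a model answer and a fallback differently, for example by offering a retry button or a human handoff when `source` is `"fallback"`. Logging `attempts` alongside it is one cheap input for the evals mentioned earlier.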

Reducing dependence on the reasoning model is key. Reasoning models will continue to improve, and products built on top of them will inevitably ride the wave. However, breakthroughs in the underlying technology do not automatically translate into improvements in your product. An AI-native product is only as good as its app layer, however strong the underlying model.